Story Generation with Generative AI

A fun project I’ve been playing with (like so many other developers working with generative AI) is a game master that relies on generative AI to create content. The ability of text and image generation models to produce creative content has already been demonstrated multiple times (and is a subject of heated debate), but I’ve always thought that harnessing that ability within a game framework has a lot of potential, and I’ve started work on a project to prove that out.

A game master (GM), for those unaware, is someone who steers the story and environment that the other players’ characters interact in, in a style of game commonly referred to as a Tabletop Role Playing Game (TTRPG). Dungeons and Dragons is by far the most popular TTRPG, but there are thousands of other systems, both big and small, that have been created by a thousand different designers. The GM’s main roles are to apply the game system’s rules and, just as importantly, to provide a story framework for the player characters to explore.

It’s easy enough to ask a large language model like ChatGPT to generate a story for you - I think the trick is adding a game system to help guide what kind of story and prompts are used in the generation. Recently I’ve been playing with the Mythic GM System, a system meant to serve as the game master in any RPG so that you can play a solo game. The Mythic system provides a framework that effectively serves as a prompt generator, letting players imagine their own twists and turns to the story they’re engaged with - which makes it a perfect fit for generating prompts that can be used by an LLM!

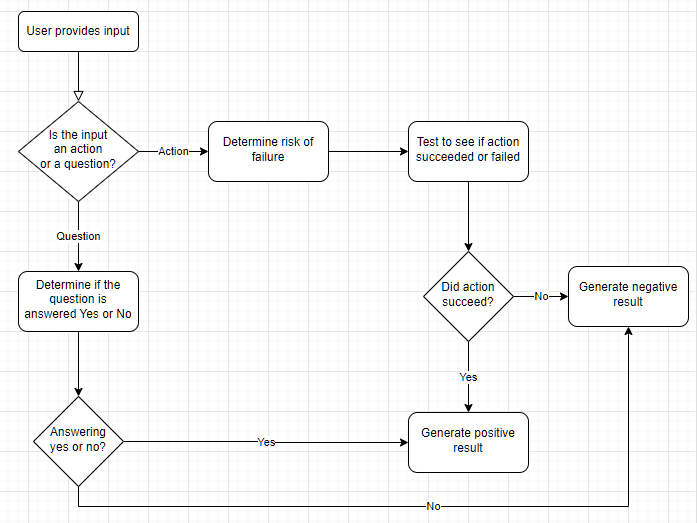

The basic algorithm we’re working with here is as follows:

This isn’t much different from how a game master would think in any standard RPG - if the thing the player character is trying to do has any element of risk or chance of failure, a game master usually relies on the TTRPG’s game rules to determine if they were successful. This is generally based on a player’s stats / equipment / the scene they find themselves in / etc. Otherwise, players are generally asking about their environment: “Is there anything in the room I can barricade the door with?” “Does the bartender seem upset?” “How cold is it outside?” All these questions generally rely on some sort of judgment call from the game master on how to answer.

Where the Mythic GM system comes in is providing a framework to make these judgment calls. Based on the current “chaos factor” and the likelihood of the question’s answer being positive, they give you odds that you roll against, which in turn prompts you to continue the story as if the question had been answered “Yes” or “No”. As an example, something that is “likely” to happen in a particularly chaotic scene might have a 65% chance of succeeding - I’d roll two 10-sided dice, and see if I got a value lower than 65 to proceed down that “positive” story thread.
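A minimal sketch of that roll, assuming the threshold (65 in the example above) has already been looked up. The function names here are made up for illustration, and the strict "lower than" comparison simply follows the wording above rather than the official Mythic rules.

```python
import random


def roll_d100(rng: random.Random) -> int:
    """Roll two ten-sided dice as tens and ones; a double zero reads as 100."""
    tens = rng.randrange(10)  # 0-9
    ones = rng.randrange(10)  # 0-9
    value = tens * 10 + ones
    return 100 if value == 0 else value


def fate_check(threshold: int, rng: random.Random) -> bool:
    """Return True (a "Yes" answer) when the roll comes in under the threshold."""
    return roll_d100(rng) < threshold


if __name__ == "__main__":
    rng = random.Random()
    answer = "Yes" if fate_check(65, rng) else "No"
    print(f"Continue the story as if the answer was {answer}")
```

Passing the random number generator in as a parameter makes it easy to seed it when you want a repeatable session.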

When using the system in person this still requires a number of judgment calls on behalf of the user, which (until recently) generally prevented these types of systems from being used programmatically - a user could ask potentially any question, and it’d be really hard to code something that would be able to interpret any input accurately. This is why in video game RPGs you are generally presented with a few specific options when it comes to character dialogs or story choices - it’s easier for a developer to code for outcome 1 of 3 than for a free form text field.

But this is where the power of LLMs can come into play! LLMs are good at picking up on the context of the language they’ve been trained on, and with some prompt engineering we can determine things like the risk of a player’s free form action in a programmatic way.

Assistant: As you take in your surroundings, a strange sense of unease begins to creep in. You notice that the hotel is unnaturally quiet apart from those sporadic sounds. The door to the hallway seems ajar, barely sealing what could be a growing chaos beyond, and the hallway light flickers ominously. The feeling of isolation weighs heavily in the air, and you can't shake the instinctual urge to investigate what has happened outside your temporary refuge.

System: Determine the odds of the user's requested action having a positive result. Return only a json integer between 0 (Certain) and 8 (Impossible).

User: I get up and peer out into the hallway through the crack in the door

Assistant: {"odds": 3} //this corresponds to "likely"

A few things to note here. A mid-conversation system prompt is a technique I’m trying that allows me to modify the original intent of the conversation history so far. This way I can take everything that has happened between the LLM and the user and use it as context for a different operation - I’m no longer asking the LLM to generate the next paragraph of the story, but instead asking it to classify the risk of the user’s input. I don’t save the new system message or the classification output to the ChatHistory object, instead effectively making a separate snapshot of the conversation so far to use it as context for the classification decision. This has worked really well in my testing so far.
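The snapshot idea can be sketched roughly like this. It assumes the conversation is held as a plain list of role/content dictionaries, which is only a stand-in for the ChatHistory object mentioned above; `build_classification_messages` is a hypothetical helper name.

```python
from copy import deepcopy

# The classification instruction, copied from the transcript above.
CLASSIFY_PROMPT = (
    "Determine the odds of the user's requested action having a positive result. "
    "Return only a json integer between 0 (Certain) and 8 (Impossible)."
)


def build_classification_messages(history: list[dict], user_action: str) -> list[dict]:
    """Copy the story so far and append the classification instruction.

    The original history is left untouched, so neither the extra system
    message nor the classification result leaks into the saved story.
    """
    snapshot = deepcopy(history)
    snapshot.append({"role": "system", "content": CLASSIFY_PROMPT})
    snapshot.append({"role": "user", "content": user_action})
    return snapshot


if __name__ == "__main__":
    history = [{"role": "assistant", "content": "The hallway light flickers ominously."}]
    messages = build_classification_messages(history, "I peer out into the hallway.")
    print(len(history), len(messages))
```

The returned list is what would be sent to the model for the classification call, while the story history carries on as if that call never happened.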

I’m also using a feature supported by the gpt-4o-mini model, JSON mode, which asks the model to return a JSON object as its response (this is done in code, through the API’s response format setting). This constrains the model’s output to parseable JSON, which is much easier and more consistent to work with than asking the LLM nicely to return a string you can parse and hoping for the best. Now, things that used to be solely in the human domain (making judgment calls about the story and the user’s questions and actions) can be classified and acted upon using code, which opens this entire problem space up to software-based solutions.
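Even when the output is guaranteed to be JSON, it is worth checking that it has the shape the rest of the code expects. Here is a small sketch of that step, assuming the model replies with an object like `{"odds": 3}` as in the transcript above; `parse_odds` is a hypothetical helper, not part of any library.

```python
import json


def parse_odds(raw: str) -> int:
    """Parse the classifier's reply and return the odds index (0 to 8).

    Raises ValueError if the reply is not valid JSON or the value is missing,
    not an integer, or out of range.
    """
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    odds = data.get("odds") if isinstance(data, dict) else None
    # bool is a subclass of int in Python, so rule it out explicitly.
    if isinstance(odds, bool) or not isinstance(odds, int) or not 0 <= odds <= 8:
        raise ValueError(f"unexpected classifier reply: {raw!r}")
    return odds


if __name__ == "__main__":
    print(parse_odds('{"odds": 3}'))
```

If the check fails, the caller can retry the classification or fall back to a middle-of-the-road value, whichever suits the game.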

The other issue we can address with a system like this is spontaneity. A large language model won’t randomly decide to make a user’s action succeed or fail, or inject a random event into the story - it’s just going to do whatever it’s prompted to do. But if we approach this from another angle, we can make those decisions in our own code and modify the prompt to tell the LLM what to do.

Let’s say that, using the number parsed from the odds classification above, our code rolls the dice and decides that the user’s attempted action fails:

Assistant: you spot several items in the room that could help reinforce your barricade. The desk is sturdy and relatively heavy, making it an excellent option to push against the door. Additionally, there are a couple of wooden chairs that can be used to wedge against the door handle or stacked against it for extra support. A bedside table also stands beside the bed. With these items at your disposal, you can create a more secure barrier and buy yourself some time in this precarious situation.

System: The user's next action will fail. //this was determined using code

User: I push the desk against the door to create a barricade.

Assistant: With a determined effort, you grip the edge of the desk and begin to shift it across the carpeted floor, straining against its weight. As you heave it closer to the door, the legs scrape along the ground, generating a harsh noise that cuts through the silence like a knife. The desk finally slams against the door, and you feel a momentary rush of relief as it fits snugly into place. However, just as you step back to admire your makeshift barricade, the pervasive quiet is shattered by a series of heavy thuds echoing from the hallway.

Using the same approach as above, we inject a new system prompt telling the LLM how the next action should resolve (negatively), and then allow the LLM to continue the story in that context. Without that clue, the LLM would’ve continued on its merry way, never giving the user any bad news.
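Putting the pieces together, the outcome injection might look something like the sketch below. The threshold table is entirely made up for illustration (it is not Mythic's actual chart and ignores the chaos factor), the messages are plain role/content dictionaries, and the function names are hypothetical.

```python
import random

# Hypothetical success thresholds (percent) for odds 0 (Certain) to 8 (Impossible).
# These numbers are placeholders, NOT the values from the Mythic rulebook.
HYPOTHETICAL_THRESHOLDS = [95, 85, 75, 65, 50, 35, 25, 15, 5]


def outcome_message(odds: int, rng: random.Random) -> dict:
    """Roll against the threshold for these odds and describe the result."""
    roll = rng.randint(1, 100)  # inclusive on both ends
    succeeded = roll < HYPOTHETICAL_THRESHOLDS[odds]
    verdict = "succeed" if succeeded else "fail"
    return {"role": "system", "content": f"The user's next action will {verdict}."}


def build_story_messages(history: list[dict], user_action: str,
                         odds: int, rng: random.Random) -> list[dict]:
    """Return a new message list with the outcome hint placed before the action."""
    return [*history, outcome_message(odds, rng),
            {"role": "user", "content": user_action}]


if __name__ == "__main__":
    history = [{"role": "assistant", "content": "You spot a sturdy desk."}]
    for message in build_story_messages(history, "I push the desk against the door.",
                                        3, random.Random()):
        print(message["role"], "-", message["content"])
```

The dice roll lives entirely in code, so the LLM only ever sees the verdict and is left to narrate it.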

You can imagine extending this to integrate further with a TTRPG system, incorporating the user’s skills (maybe they’re good at running and terrible at negotiation), their health (“You’re too weak to attempt that action”), their equipment (having a cellphone on you would make contacting the police more likely), and so forth. I haven’t extended those functions to my app just yet, but even with this basic framework in place I’m able to have some pretty interesting and detailed adventures. Check the link below for an example transcript!